Matlab was more powerful for the actual analysis, but not for the preprocessing. All I had to do was load the netCDF data file directly into Panoply and boom: it gave me the basic structure, including the number of data points, the latitudes and longitudes of those points, and the other essential details I needed.

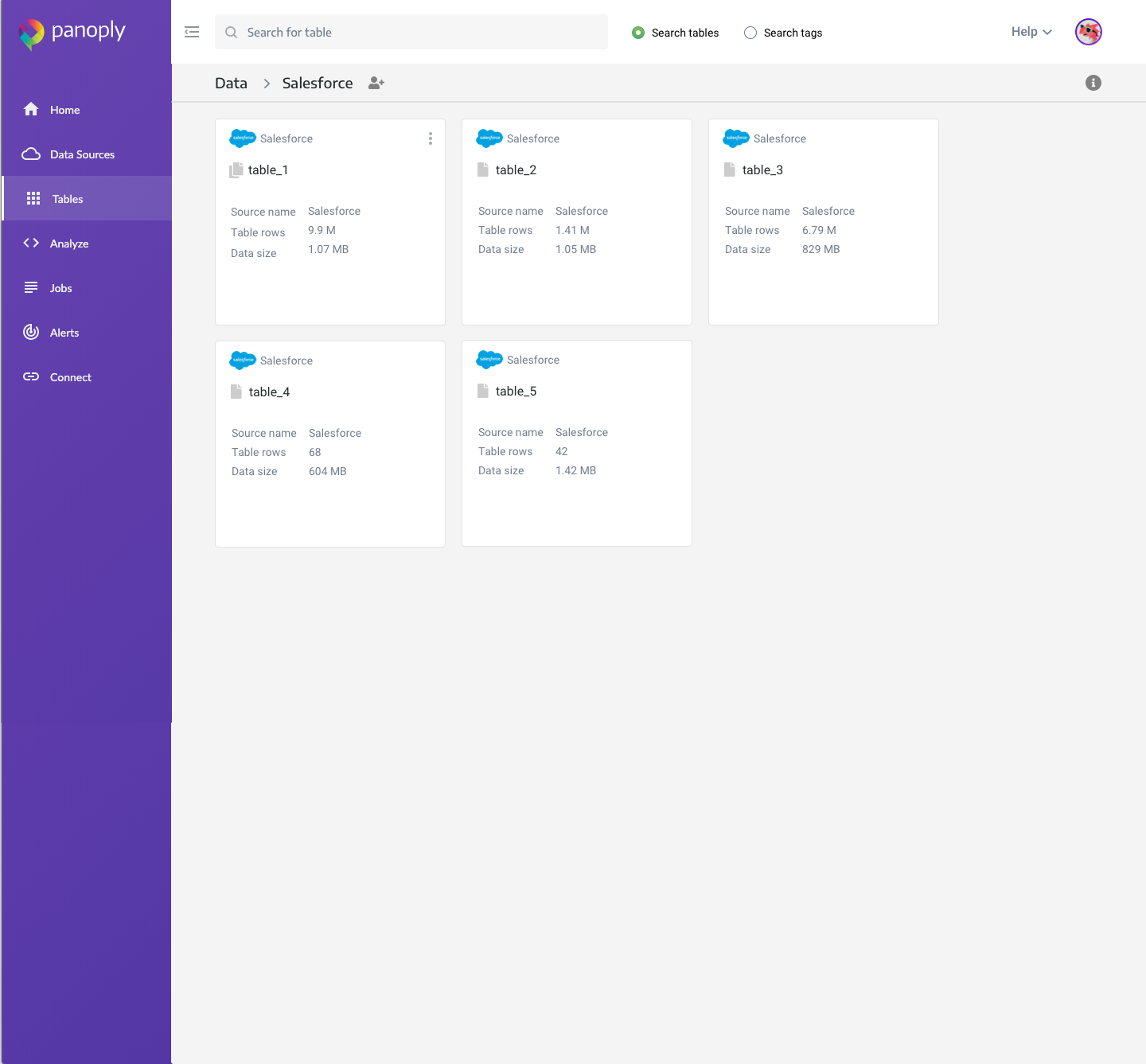

I am working with geographical data that has latitudes, longitudes, and a variable I am trying to analyze. I was trying to analyze a bunch of climate variables that were in netCDF format. Before I could write any Matlab code to analyze the data, I needed to know the data structure. In the past I have used a combination of F77 and Matlab for this. However, I wanted to exclude Fortran and have a more convenient way of learning the basic data structure. I was introduced to Panoply by a colleague, and it is a great tool to have for this kind of data.

On data sources that support it, Panoply uses incremental loads to pull only the data that was updated or added since the last pull, and then updates the rows that have changed. For some data sources, this is the default behavior. On others, users can define an incremental key in the Advanced Settings during setup. This key is then used to identify the most up-to-date rows, saving vast amounts of time in the collection process and improving performance.

For API sources, Panoply's default behavior is to collect the entire data set from the source on every collection. For data sources with small data sets, that's fine: the data set is small enough to be fully consumed in just a few minutes. With larger data sets, such as Shopify or Stripe, this can quickly become infeasible. With a fast-growing data set, reading the entire source might take hours, most of which is spent re-reading unchanged data. For these data sources, Panoply supports the ability to define an incremental key. In most API sources, incremental loads are already included, so there's no need to make any schema changes in your data source.

Incremental Keys

For data sources that do not have this default behavior built in, an incremental key can be defined by the user. An incremental key is an attribute of the collected data that can be reliably assumed to indicate the last update point for the rows in that data source. Simple examples are attributes like modification_date. Once configured, Panoply can use these attributes to skip over all unchanged rows in the data source and fetch only the new or updated rows on every iteration, reducing the processing time from hours to minutes or seconds. Whenever Panoply reads a data source, it first reads the last value stored for that incremental key. Then it uses this value to skip over all rows below that point, which are assumed to be unchanged since the last time the data source was consumed.

In some data sources, this is simple to reason out and set up. For example, with Amazon S3, we can use the ModifiedDate to avoid re-reading files that were already processed and haven't been modified. But in other data sources, like Postgres or MySQL, we really can't make any assumptions about the data unless you explicitly define an incremental key from that data source.

For database and file system sources, you can enter anything as the incremental key, but certain types of fields are most common, such as timestamps or incremental IDs. For APIs, the most common timestamp syntax is ISO 8601 format: yyyy-mm-ddThh:mm:ss.sssZ.
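To make the incremental key idea concrete, here is a minimal Python sketch. It is not Panoply's actual implementation, just an illustration under a few assumptions: the source is an in-memory list of rows, the incremental key is a `modification_date` field holding ISO 8601 timestamps, and the names `parse_iso8601`, `incremental_load`, and `last_seen` are made up for this example.

```python
from datetime import datetime


def parse_iso8601(value):
    """Parse a timestamp such as 2024-01-02T03:04:05.678Z into an aware datetime."""
    # Some Python versions reject a trailing "Z" in fromisoformat(),
    # so rewrite it as an explicit UTC offset first.
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def incremental_load(rows, last_seen, key="modification_date"):
    """Return the rows newer than last_seen, plus the new value to store.

    Assumption of this sketch: rows whose key is equal to or below the
    stored value are treated as unchanged and skipped.
    """
    fresh = [
        row for row in rows
        if last_seen is None or parse_iso8601(row[key]) > last_seen
    ]
    # Remember the highest key value seen so the next pull can start from it.
    new_last_seen = max((parse_iso8601(r[key]) for r in fresh), default=last_seen)
    return fresh, new_last_seen


# Hypothetical source data standing in for a real table or API.
source = [
    {"id": 1, "modification_date": "2024-01-01T00:00:00.000Z"},
    {"id": 2, "modification_date": "2024-01-02T12:30:00.000Z"},
]

# First pull: nothing stored yet, so every row is read.
first, state = incremental_load(source, None)

# Before the second pull, row 2 is updated and row 3 is added.
source[1]["modification_date"] = "2024-01-03T08:00:00.000Z"
source.append({"id": 3, "modification_date": "2024-01-03T09:15:00.000Z"})

# Second pull: only the rows changed since the stored value come back.
second, state = incremental_load(source, state)
print([row["id"] for row in second])  # prints [2, 3]
```

The unchanged row 1 is skipped on the second pull, which is the whole point: the cost of a pull scales with what changed, not with the size of the source. A real loader would keep `last_seen` in durable storage between runs and would filter at the source (for example in the query itself) rather than in memory.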
